There's a peculiar species of delusion unique to founders building in AI. It goes something like this: you see a massive industry doing something expensive and slow. You see a new technology doing something cheap and fast. You draw the obvious line between the two points and convince yourself you've discovered arbitrage.

What you've actually discovered is the setup for a punchline the market is about to deliver at your expense.

This pattern is everywhere right now. Delve, a YC-backed compliance startup, raised $32 million at a $300 million valuation to automate SOC 2 and HIPAA certifications with "agentic AI." Compliance in days instead of months. By January 2026, over 1,000 customers. By March, a whistleblower investigation had surfaced, alleging that Delve had been generating near-identical audit reports across hundreds of clients, with pre-written conclusions that no auditor had reviewed. The accusation: they hadn't automated compliance. They'd automated the appearance of it. Insight Partners quietly scrubbed its investment thesis from the internet.

The two-lines-on-a-napkin thesis is seductive because it's not wrong in the abstract. Compliance really is expensive. Fashion photography really is inefficient. The problem isn't the observation. It's the catastrophic underestimation of why the "expensive and slow" industry is expensive and slow in the first place. Usually: because the details are brutally hard, and the details are the product.

The thesis that wrote itself

I built twomore because the thesis was, on paper, bulletproof.

AI-generated images had quietly crossed the threshold from "impressive tech demo" to "indistinguishable from reality if you don't zoom in too hard." The melting faces and seven-fingered hands of 2023 had given way to images you'd scroll past on Instagram without a second thought.

Fashion, meanwhile, remained stubbornly analogue in its content creation. Brands were still booking studios, hiring photographers, coordinating models, and spending five figures on shoots that produced a handful of usable images. The inefficiency was almost offensive.

Zara had already started experimenting with AI campaigns. H&M was creating digital twins of thirty real models, licensing their likenesses to generate marketing content without requiring anyone to actually stand in front of a camera.

The writing wasn't just on the wall. It was on the wall, the ceiling, and the floor, in neon.

The generation models were improving at a pace that made planning six months ahead feel like an act of faith. With the right tools and careful prompting, you could produce results that genuinely narrowed the gap between synthetic and real.

Not perfectly, not yet, but enough to make you believe the remaining distance was just engineering.

The dopamine of building

I should mention that I'm not an engineer by training. I started in design. The kind where you're sketching layouts and obsessing over typography, not writing functions. Marketing came next, because it turns out that making beautiful things nobody sees is a hobby, not a career. I needed to get my work in front of people, so I learned how to promote it. And promoting it meant building websites, which meant learning to code, which meant I'd gone from picking fonts to picking frameworks in the span of a few years without ever consciously deciding to become technical.

When I first taught myself to code, the process looked the way it did for everyone who came up that way: hours on Stack Overflow hunting for the one answer that matched your exact error message, YouTube tutorials you'd watch three times before the concept stuck, weeks of practice before you could apply anything to a real project. You'd spend an entire afternoon debugging a CSS layout issue that turned out to be a missing semicolon. That was the tax you paid for building things without a computer science degree, and you paid it gladly because the alternative was not building at all.

Then, almost overnight, the tax disappeared.

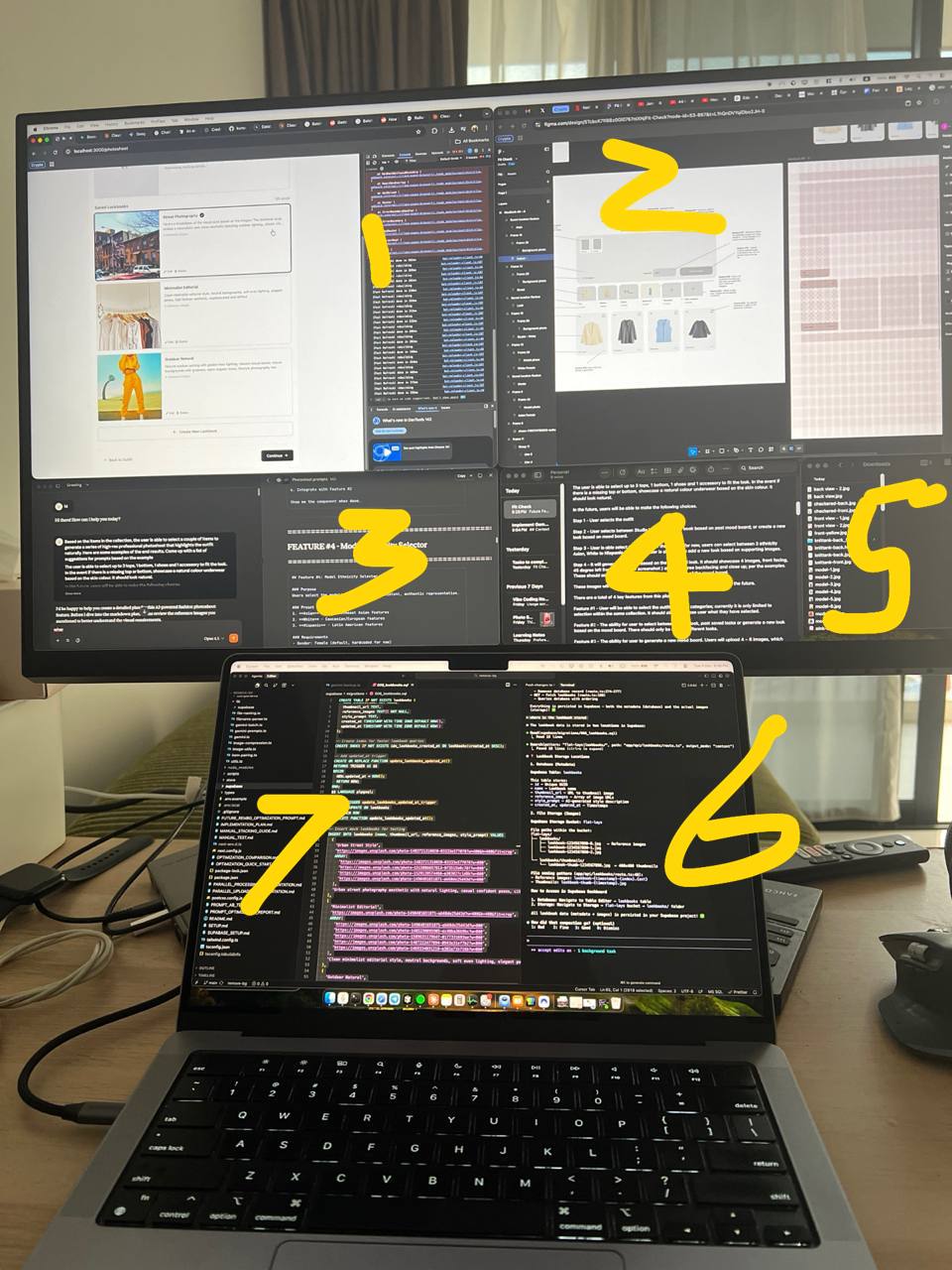

Picture this: multiple terminals running simultaneously, each one autonomously constructing different features, AI agents orchestrating the work like some silicon symphony conductor. You'd sit back with your coffee and watch your product assemble itself across six screens. Problems that would have cost me a full day on Stack Overflow were solved in seconds. Features that would have taken weeks of self-study to even attempt were shipping before lunch. It was the closest thing I've experienced to a cheat code for building software.

Claude Code, which had started its life as a fragmented multi-terminal affair requiring separate windows for planning, building, and testing, had evolved into a single monolithic beast that could hold a million tokens of context and execute the full development cycle without blinking. The infrastructure for building AI products was improving almost as fast as the AI products themselves.

The opportunity seemed irrefutable: massive market, improving technology, incumbents asleep at the wheel, and tools that let you build at superhuman speed.

What could possibly go wrong?

The devil in the drape

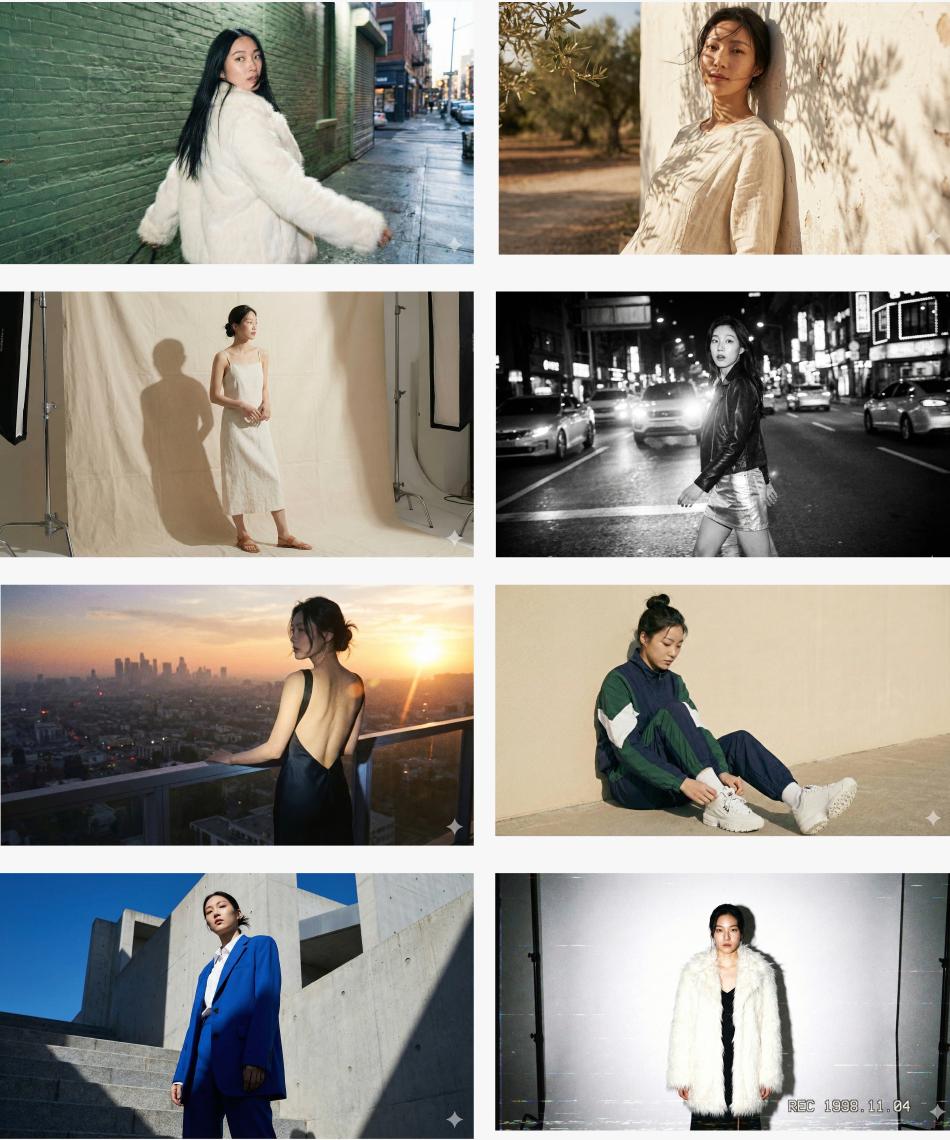

The competitors in this space are all doing roughly the same thing. Upload a garment, select an AI model, receive a photo. For about 80% of use cases, the output is perfectly acceptable. Not extraordinary, not embarrassing, just competent. The e-commerce equivalent of a firm handshake.

The problem is the remaining 20%. That's where every dollar of real differentiation lives, and it's where AI goes to die.

A single fashion photograph is a staggeringly complex object when you bother to deconstruct it. That complexity is precisely what makes traditional photography expensive, and precisely what AI cannot yet replicate.

There's the model. The mood: lighting, makeup, the overall atmosphere that whispers "luxury" or screams "streetwear." The location, even when the location is just a different shade of white wall.

The pose: standing, sitting, turning, the particular angle of a hip that separates editorial from catalogue.

Then the outfit itself. A top alone fractures into fitted tees, wrap blouses, off-shoulder cuts, each with its own drape and fit requirements. Then bottoms, outerwear, accessories, and within each, a labyrinth of details that matter to anyone who actually sells clothes for a living. Is the shirt tucked? Half-tucked? Is the inner layer visible? What's the drape? How do the proportions shift when you swap a midi skirt for wide-leg trousers?

And this list conveniently excludes outdoor settings, which introduce so many additional variables they might as well exist in a parallel dimension.

You'll find no shortage of content creators on social media demonstrating how trivially easy it is to generate fashion imagery with tools like Gemini. They're performing a very specific magic trick.

What they're showing you is either a loose concept where the AI fills in whatever approximation it fancies and nobody examines the output too closely, or a reproduction of an existing reference image where the model essentially performs a sophisticated copy-paste with a face swap. And if you look a little closer, most of these creators aren't actually building products. They're selling courses, teaching other people how to draw the same obvious line between "expensive" and "AI" and convincing them there's gold at the intersection.

Matthew Drinkwater, who runs innovation at the London College of Fashion, said something that stuck with me: AI won't replace designers, but it will elevate what they can do. And he's right, as far as it goes. The technology is exceptional at speed, at iteration, at producing variations. But fashion photography isn't a variation problem. It's a vision problem. A stylist knows why a particular belt transforms an outfit. A photographer understands that the difference between a good shot and a great one is three degrees of chin angle and the shadow it casts on a collarbone.

And there's a catch. Researchers at UC Berkeley found that when humans use AI as a creative partner, idea diversity collapses. Both humans and AI gravitate toward the statistically "likely" answer, and everyone ends up in the same neighbourhood. The human hand, the imperfect and sometimes irrational choices of a creative director, is what keeps fashion content from feeling like it rolled off an assembly line.

The trilemma of who pays

The cruelest discovery was not technical but commercial, and it took the form of a trilemma so clean it humbled every assumption I'd carried in from the outside.

On the left of the spectrum sit the brands who genuinely do not care. They pull images from their suppliers or snap something on an iPhone and list it. The product is the product. Visual polish is overhead, and overhead is the enemy.

These people are not your customers. They would not be your customers if you gave the product away for free, because the act of using it implies a belief they don't hold: that presentation matters.

On the right sit the premium brands, the ones with ongoing relationships with real models who appear in their photoshoots, their live videos, their Instagram stories, their TikToks. They've amortised the cost of physical production across enough channels that AI doesn't save them money.

It costs them something far more valuable: authenticity. A brand caught using AI-generated models risks the kind of consumer backlash that turns a cost-saving exercise into a reputation crisis. Levi's floated the idea of AI-generated models for diversity and had to quietly shelve the plan. H&M's digital twin initiative drew mixed reactions, with the first model to see her AI replica describing the experience as both "exciting and unsettling."

And in the middle? The curious ones, the experimenters, the brands open to AI and willing to try new tools? They exist, certainly. But they also have access to Gemini, to ChatGPT, to every general-purpose AI model that offers image generation as one feature among dozens.

Why pay for a specialised fashion photography tool when twenty dollars a month buys you a Swiss Army knife that also writes your ad copy and answers your customer service emails?

The brands that need the most help with content are either philosophically opposed to paying for it, commercially immune to your value proposition, or already served by tools that cost less and do more.

This, for those keeping score, is what business school professors call a "challenging market dynamic" and what founders call "oh no."

When the platform swallows the feature

If the brand spectrum wasn't sobering enough, the platform dynamics made it worse.

Google's Doppl app is shutting down on April 30, 2026, less than ten months after launch. Doppl was a consumer fashion experiment: upload a photo of yourself, feed it images of any outfit, and the AI generates a realistic image of you wearing those clothes. TIME Magazine called it one of the Best Inventions of 2025.

Doppl and twomore address different problems. Doppl was a consumer vanity mirror; twomore is a B2B photography studio.

But the autopsy reveals the same cause of death: platformisation. Google didn't shut Doppl down because the technology failed. They shut it down because the technology worked well enough to fold into Google Shopping and image search results. Why maintain a standalone app when the same capability can be a feature inside something a billion people already use?

Here's where it gets properly uncomfortable for anyone building in this space.

Just weeks before announcing Doppl's shutdown, Google launched Photoshoot inside Pomelli, their free AI marketing tool for small businesses. Pomelli analyses your brand's website, extracts your visual identity, and generates on-brand marketing assets. Photoshoot, specifically, takes a product image and produces studio-quality photography using Google's Nano Banana model. It works for jewellery, for coffee, for yoga studios. It works for fashion.

And it costs nothing.

Now, Google may eventually charge for this. They usually do. But it'll be bundled into a broader ecosystem the same way Google Workspace bundles Docs, Sheets, and Drive into a single subscription. The brands in the middle of the spectrum will pay for a suite that handles everything from marketing assets to product photography. Not because any individual feature is best-in-class, but because the bundle is convenient and the price is right.

That's the competitive landscape for a vertical AI fashion tool: you're not competing against other startups. You're competing against a rounding error in Google's feature set.

Building on borrowed ground

When your entire product sits on top of frontier models you don't own, you're essentially a tenant farmer. You work the land, you plant the seeds, you pray for good weather. But the landlord can raise the rent, flood the field, or sell the property to a developer whenever the mood strikes.

In practical terms, this means rate limits that throttle your users during peak hours. Downtime that's entirely outside your control. Cost structures that shift without warning. Quality degradation when the service is under heavy load.

Every one of these hits the user experience directly, and not one of them can be engineered around. You can build the most elegant workflow, the most intuitive interface, the most fashion-savvy prompt architecture in the world, and it all falls apart when the model provider has a bad Tuesday.

Shouldn't I have known this from the start? I did. Every founder building on top of frontier models knows the moat is thin. But the two-lines-on-a-napkin thesis has a way of overriding your better judgment. You tell yourself that focusing on a specific niche, understanding the domain deeply, building the workflow that general-purpose tools can't match, these things will create enough distance. And the dopamine of building, watching those terminals hum, seeing features materialise faster than you've ever shipped anything in your life, that makes the risk feel manageable.

The skills are real. Building with AI agents, designing prompt architectures, orchestrating complex workflows, none of that disappears when the product thesis doesn't hold. But six terminals running simultaneously is impressive in the same way a treadmill is impressive: a lot of motion, not necessarily a lot of distance. The question is whether you're building fast or building the right thing, and the speed itself makes it very hard to tell the difference.

There's a story that Hamilton Mann shared about a company that rolled out GenAI across 2,800 employees. On paper, it was a success. More output, faster turnaround, dashboards lighting up green. But when you looked closer, the quarterly reviews had gotten prettier without getting smarter. Beautiful slides, thin judgment. The skills that actually mattered, asking the hard questions, reframing problems, connecting dots across teams, those quietly atrophied. People stopped leading and started curating. The tool set the pace and humans followed.

I recognise that pattern more than I'd like to admit. The idea was always to build fast, get something into users' hands, and let their behaviour tell us what to double down on. Ship, learn, iterate. But the speed of building created its own gravity. When features materialise before lunch, the temptation is to keep shipping rather than pause and ask the uncomfortable question: does any of this actually solve a problem someone will pay for?

And here's the thing that kept surfacing no matter how fast we shipped: regardless of whether you use a real model or an AI-generated one, the photoshoot itself is still fundamentally a creative act. The model is one variable. The lighting, the styling, the mood, the composition, the storytelling through clothing: those are the variables that make the image work. Unless you solve those dimensions consistently, the model is just one piece of a puzzle with too many missing parts.

No amount of shipping speed changes that.

The convergence

The market is converging, and it's converging toward mediocrity. That sounds harsh, but I mean it precisely: the future belongs to models that are good enough at everything, not perfect at anything.

Google's Gemini already handles fashion imagery alongside everything from code generation to document analysis. Most users, faced with the choice between a $20/month tool that handles all of the above and a $50/month tool that handles fashion photography slightly better, will choose the Swiss Army knife every time.

Not because they don't appreciate quality, but because the marginal improvement doesn't justify the marginal cost.

Is there room for specialised AI fashion products? Possibly. But the window is the width of a belt buckle and closing fast. You need to be meaningfully, demonstrably, can't-ignore-it better than the general-purpose alternative, for a customer segment large enough to sustain a business and willing enough to pay for the difference.

But here's a contrarian thought worth sitting with: in a world where mediocre companies are all using the same AI tools to generate the same competent-but-forgettable content, perhaps authenticity becomes the ultimate differentiator.

Think about what happened to email. Inboxes are now flooded with AI-generated sales outreach, personalised at scale but personal to nobody. The response? The most effective salespeople have gone back to face-to-face meetings, handwritten notes, in-person events. The human touch became the signal precisely because everything else became noise.

Fashion may follow the same arc. When every mid-tier brand is using AI to produce identical-looking product photography, the brands that invest in real shoots, real stylists, real creative direction will stand out not despite the expense but because of it. The imperfection becomes the proof of authenticity. The cost becomes the moat.

WGSN's trend forecasters are already seeing this play out on the runways. Prada showed intentional abrasions in their Fall 2026 collection. Ashlyn showcased raw-edge tailoring with visible topstitching. WGSN calls it the "anti-AI aesthetic": designers deliberately revealing the human hand behind their work. In a market flooded with sameness, visible creativity and genuine cultural exchange are becoming fashion's most powerful currency.

What the market taught me

Building twomore taught me something that I suspect every founder in the AI application layer will eventually learn: the opportunity was real, the market signals were genuine, the technology was impressive, and none of that was sufficient.

The gap between "this should work" and "this does work, for enough people, sustainably" is not a gap you can close with better engineering. It's a gap that exists in the market itself, in the messy, irrational, deeply human decisions that brands make about how they want to present themselves to the world.

But the experience is far from wasted. The ability to build and ship with AI agents, to orchestrate complex systems, to move from idea to working product in days instead of months, that skill is genuinely valuable and will only become more so. What I've learned to be more honest about is where that speed creates real value and where it creates the illusion of progress.

There's an old line from Robert Solow that keeps aging well: "You can see the computer age everywhere but in the productivity statistics." Swap "computer" for "AI" and you have 2026. Despite hundreds of S&P 500 companies claiming positive AI adoption, 90% of managers in a recent survey reported no measurable impact on productivity. The tools are real. The speed is real. But speed without direction is just expensive motion.

What I've taken from this is not that the tools are the problem. It's that six terminals running simultaneously is not a substitute for sitting quietly with a notebook and thinking hard about whether your customers actually want what you're building. The first is satisfying. The second is productive. Learning to tell the difference might be the most important skill a founder can develop right now.

I'm still building. The instinct that pulled me toward twomore, seeing a gap and wanting to close it, that hasn't changed. What's changed is how I evaluate which gaps are worth closing, and how honest I am with myself about the distance between a compelling thesis and a viable business.

The napkin math will always look good. The question is what you find when you flip the napkin over.